Is the transition from GPU to CPU the next phase of the AI bull market?

Trump recently boasted on Truth Social about the US$45 billion he says he made for the U.S. by investing in Intel.

Source: Truth Social

The Nasdaq 100 is up 13.32 per cent this calendar year, but that figure masks much larger gains for individual artificial intelligence (AI) compute stocks. AMD, for example, is up 96 per cent, Arm Holdings is up 117 per cent, and Intel, a company most investors had written off and in which the Trump administration took a stake, is up 206 per cent.

So, what’s going on?

The answer is the advent of agentic AI.

In the past, it might have taken an experienced coder (even an experienced vibe coder) a few days to build an app or script that automated an online task, such as comparing prices or finding a product or asset that met your criteria: a car or house for sale on Carsales or realestate.com.au in Australia, TradeMe in NZ, or Zillow in the U.S.

Today, the same result can be achieved by a worker created by an AI agent. We simply use voice commands to tell the agent what we want the worker to do, and the agent writes the underlying code, tests it, runs it, and manages it for us. The worker then acts autonomously, filtering what it finds online against the criteria set and presenting its results in the required format, daily, weekly or even continuously.
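
To make the idea of a "worker" concrete, here is a minimal Python sketch of the filtering step such an agent-written worker might perform. The `Listing` and `Criteria` fields, the `matches` and `run_worker` functions and the sample data are all hypothetical; a real worker would first collect listings from a site such as Carsales or Zillow (subject to that site's terms of use) rather than read them from a hard-coded list.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    # Hypothetical fields a worker might extract from a car-listing page
    make: str
    model: str
    year: int
    price: int  # asking price in dollars
    km: int     # odometer reading


@dataclass
class Criteria:
    # The buyer's requirements, as the user might dictate them to the agent
    make: str
    max_price: int
    min_year: int
    max_km: int


def matches(listing: Listing, c: Criteria) -> bool:
    """Return True only if the listing satisfies every criterion."""
    return (
        listing.make == c.make
        and listing.price <= c.max_price
        and listing.year >= c.min_year
        and listing.km <= c.max_km
    )


def run_worker(listings: list[Listing], c: Criteria) -> list[Listing]:
    """Keep the matching listings and sort them cheapest first."""
    hits = [item for item in listings if matches(item, c)]
    return sorted(hits, key=lambda item: item.price)


if __name__ == "__main__":
    # Hard-coded sample data standing in for scraped results (hypothetical)
    sample = [
        Listing("Toyota", "Corolla", 2019, 21_000, 60_000),
        Listing("Toyota", "RAV4", 2021, 38_000, 30_000),
        Listing("Mazda", "CX-5", 2020, 29_000, 45_000),
        Listing("Toyota", "Camry", 2018, 19_500, 95_000),
    ]
    wanted = Criteria(make="Toyota", max_price=40_000, min_year=2019, max_km=80_000)
    for hit in run_worker(sample, wanted):
        print(f"{hit.year} {hit.make} {hit.model}: ${hit.price:,}, {hit.km:,} km")
```

Run as a script, it prints the 2019 Corolla and the 2021 RAV4, cheapest first. A scheduled job that repeats this filter daily or weekly is the kind of autonomous worker described above; note that this filtering and formatting is ordinary CPU work, not GPU work.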

We live in a new world where AI agents have the potential to automate almost anything we ask of them.

Compute

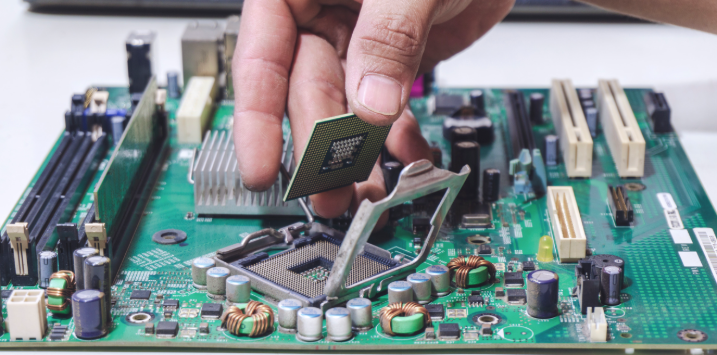

Compute refers to the raw processing power a computer needs to perform tasks, calculate data, and run applications. Think of it as the brain or the engine of a machine. At its core, compute is the action of calculating and solving problems, such as processing a 3D image or running an AI model. Just as a car needs an engine to move, a computer needs compute (CPU or GPU) to turn on, load apps, and process information. A “lot of compute” means the machine can handle complex, fast tasks like video editing or simulation.

Why has Trump made so much money on Intel?

Since ChatGPT launched in November 2022, requiring massive numbers of Nvidia GPUs to train and serve large language models (LLMs), Nvidia's stock price has risen 1,199 per cent. But agentic AI changes the mix. When agents are tasked with functions such as browsing the web, comparing listings and prices, and then building spreadsheets, websites or lists and populating them with results, those tasks load the Central Processing Unit (CPU) more than the Graphics Processing Unit (GPU).

The GPU excels at highly parallel tasks like rendering visuals during gameplay, manipulating video data during content creation, and computing results in intensive AI workloads.

The CPU is commonly referred to as the “brain” of the computer. It’s essential to all modern computing systems because it executes the commands and processes required by your computer and operating system. The CPU is also important in determining how fast programs run when performing tasks such as surfing the web, running calculations for game physics, and building spreadsheets.

As workloads shift from reasoning and inference, which are GPU-heavy, towards web scraping, code execution and testing, and multi-agent tasking, the CPU-to-GPU ratio will change, with more CPUs for every GPU. Indeed, it's already happening. According to Morgan Stanley Research, earlier server builds used 1 CPU for every 12 GPUs.

With the rise of the agentic workloads described above, the ratio is now closer to 1 CPU for every 2 GPUs.
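
Taken at face value, moving from 1:12 to 1:2 means six times as many CPUs per GPU. The short Python calculation below shows what that implies for a hypothetical fleet of 100,000 GPUs; the fleet size and the `cpus_needed` helper are illustrative assumptions, not figures from Morgan Stanley.

```python
# CPU:GPU ratios cited above (Morgan Stanley Research)
EARLIER_CPUS, EARLIER_GPUS = 1, 12
AGENTIC_CPUS, AGENTIC_GPUS = 1, 2


def cpus_needed(gpus: int, cpus_per_unit: int, gpus_per_unit: int) -> float:
    """CPUs required to pair with a GPU fleet at a fixed CPU:GPU ratio."""
    return gpus * cpus_per_unit / gpus_per_unit


fleet = 100_000  # hypothetical GPU count, for illustration only
before = cpus_needed(fleet, EARLIER_CPUS, EARLIER_GPUS)
after = cpus_needed(fleet, AGENTIC_CPUS, AGENTIC_GPUS)

print(f"Earlier builds: {before:,.0f} CPUs")
print(f"Agentic builds: {after:,.0f} CPUs")
print(f"Multiple: {after / before:.1f}x")
```

For the same GPU fleet, CPU demand rises from roughly 8,333 to 50,000 units, a sixfold increase, which is the arithmetic behind the CPU thesis. It ignores differences in CPU size, core count and price between the two generations of builds.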

It’s because of this trend that compute stocks are rallying. In my opinion, it’s possible they have run too hard, as investors tend to overestimate the short term. But it’s also true that investors underestimate the long term, which means the future is promising for these companies.

Why?

Goldman Sachs perhaps articulated it best: "agentic use cases are [currently] more hobbyist tinkering with an idea than full-fledged commercial deployments. The moment when (if?) an enterprise agent, which is on 24×7 and can do complex operations, [arrives], we see an inflection point in token usage. When an agent can run profitably (i.e., the value of the work it does exceeds its token usage), adoption will explode."
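
Goldman's test for when an agent "runs profitably" reduces to a simple inequality: the value of the work must exceed the cost of the tokens it consumes. The minimal Python sketch below expresses that condition; the function name, the task value, the token count and the price per million tokens are all hypothetical.

```python
def agent_is_profitable(value_per_task: float, tokens_per_task: int,
                        price_per_million_tokens: float) -> bool:
    """True when the work an agent does is worth more than the tokens it burns."""
    token_cost = tokens_per_task / 1_000_000 * price_per_million_tokens
    return value_per_task > token_cost


# Hypothetical task worth $5 that consumes 2 million tokens
print(agent_is_profitable(5.0, 2_000_000, 10.0))  # token cost $20, so False
print(agent_is_profitable(5.0, 2_000_000, 2.0))   # token cost $4, so True
```

The same task flips from unprofitable to profitable purely because tokens get cheaper, which is why falling token costs matter so much to the adoption argument.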

The theory goes that hyperscalers will be in the box seat: the cost to process each token is falling, the price per token is stabilising, and demand is exploding, so they should be able to improve their margins dramatically. That could wipe away concerns about whether hyperscalers such as Alphabet (GOOG), Amazon (AMZN) and Meta (META) will earn an adequate return on investment (ROI) on the hundreds of billions of dollars spent on AI data centres.
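
The margin mechanics can be sketched with simple arithmetic. Every number below is a hypothetical assumption chosen for illustration, not any hyperscaler's actual pricing or cost: the price per million tokens is held flat while the cost to serve them halves and volume grows fivefold.

```python
def gross_profit(tokens_m: float, price_per_m: float,
                 cost_per_m: float) -> tuple[float, float]:
    """Return (gross profit, gross margin) for a token volume in millions."""
    revenue = tokens_m * price_per_m
    cost = tokens_m * cost_per_m
    profit = revenue - cost
    return profit, profit / revenue


# Hypothetical: price stabilises at $10 per million tokens
PRICE = 10.0
today = gross_profit(tokens_m=1_000, price_per_m=PRICE, cost_per_m=6.0)
later = gross_profit(tokens_m=5_000, price_per_m=PRICE, cost_per_m=3.0)

print(f"Today: profit ${today[0]:,.0f}, margin {today[1]:.0%}")
print(f"Later: profit ${later[0]:,.0f}, margin {later[1]:.0%}")
```

Under these assumptions the margin widens from 40 per cent to 70 per cent, and because volume also grows, gross profit rises almost ninefold, from $4,000 to $35,000. If prices fell as fast as costs, the margin would not expand, which is why the "price per token is stabilising" assumption carries so much weight.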

As an aside, last week's earnings from Amazon, Microsoft, Meta, and Google validated the AI super-cycle. These companies also noted they are supply-constrained: demand exceeds the capacity they can deliver, and they could have made more money with more capacity.

- Amazon – Revenue of $181.5 billion (+17 per cent year-on-year, or YoY), beat estimates by 2.4 per cent | AWS revenue of $37.6 billion (+28 per cent YoY), its fastest growth in 15 quarters.

- Google – Revenue of $109.9 billion (+22 per cent YoY), beat estimates by 2.7 per cent | Cloud revenue of $20.0 billion (+63 per cent YoY), beat estimates by 8.8 per cent.

- Microsoft – Revenue of $82.9 billion (+18 per cent YoY) | Azure revenue growth of +40 per cent, beat guidance of 37–38 per cent.

- Meta – Revenue of $56.3 billion (+33 per cent YoY), beat estimates by 1.4 per cent | Ad revenue +33 per cent YoY.

Back to the CPU thesis…

According to Delaware-based Market Sentiment Inc., if you want exposure specifically to CPU demand, four companies offer it in a meaningful way:

- AMD – the market leader in data centre CPUs, with its lead expected to widen further with the launch of its 2nm Venice chips. AMD should capture a larger share of the incremental revenue driven by agentic demand for CPUs.

- Intel – staging a comeback, with Xeon 6 processors being adopted by Nvidia and Google as the CPU "head node" in AI infrastructure. Plus, being strategically critical to the U.S. does not hurt.

- Nvidia – has launched its AI CPU, Vera, designed exclusively for AI agents.

- Arm – launched its first-ever data centre processor, the AGI CPU, focused on handling agentic tasks. Rather than going the raw-power route, Arm is developing a more efficient processor to reduce token costs.